
4 min read

Honcho

Apr 17, 2019 1:30:39 PM


Do you have a local SEO project that involves creating multiple NAP (name, address, phone number) listings in Google My Business (GMB) or local landing pages for localised organic search? If so, you will want to know which keywords are potentially the most profitable to target. That’s exactly what you’ll learn in this blog post.

It is also important to target densely populated areas to gain the most reach, for the least amount of work.

Local SEO on a large scale can take considerable time and effort. In the UK there are 69 cities, which means potentially up to 69 local pages to create. It is well worth it, though, if you consider that, according to the Guardian, more than 91% of the population will live in cities by 2020.

But if you’re thinking of creating 69 pages to target one keyword, you will want to be very certain that lots of people actually search for it and that it triggers localisation.

Only certain keywords trigger localisation in the search results, so it is important to start with as wide a selection of relevant keywords as possible. That way, you can prioritise your local targeting based on the best search volume.

Honchō specialise in automotive and retail, sectors where local SEO is particularly important. For example, car dealerships attract local footfall, so they need local SEO to reach local users online as well. Honchō has an in-depth analysis of how car dealerships can win at SEO.

Just to clarify: localisation works on two levels. Firstly, a localised query triggers a local pack (a map with three local listings directly beneath it). Secondly, it biases the normal organic search results towards pages that mention the searcher’s location, or a nearby location, somewhere on the page.

In order to capitalise on the potential benefits of local SEO traffic, you’ll need to first get a list of search queries that trigger ‘localisation’ in Google.

You could check each keyword manually in Google, but with thousands of keywords that could take forever. That’s where the method I’m about to teach you will come in really handy.

For demonstration purposes, we’ll pretend we are doing SEO for a chain of hospitals.

We have noticed that the search term ‘cosmetic surgery’ triggers localisation, while ‘knee surgery’ does not. As this shows, it is not always obvious which keywords will trigger localisation.

There is no foolproof way to predict localisation with 100% accuracy, particularly for keywords that do not include a location name. This is because much of the local algorithm comes down to user metrics: Google monitors how users behave on a query-by-query basis and uses this behavioural data to decide what users want in each case.

Ultimately, what you need is a huge list of keywords, such as ‘cosmetic surgery’, that trigger localisation, so that you can build local landing pages and GMB profiles to capture the traffic from these search terms.

The method for finding local keywords starts with a keyword list. There are numerous ways to get a keyword list:

Now that you’ve got your keyword list, it’s time to find out which ones trigger localisation.

The first step in the process is to turn your keywords into Google search URLs.
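If you would rather script this than build the URLs with spreadsheet formulas, the conversion can be sketched in Python. This is a minimal sketch, assuming a `google.co.uk` search URL with a `q` parameter for the keyword and a `client` parameter. The `firefox-b` client value and the helper names are hypothetical placeholders, so match them to the URL format you actually use.

```python
from urllib.parse import urlencode

# Assumed values for illustration only: adjust the domain and the "client"
# parameter to match the Google URL format you actually use.
BASE_URL = "https://www.google.co.uk/search"
CLIENT = "firefox-b"


def keyword_to_google_url(keyword: str, base_url: str = BASE_URL, client: str = CLIENT) -> str:
    """Build a Google search URL for one keyword (hypothetical helper)."""
    # urlencode() escapes values with quote_plus, so spaces become "+"
    # and characters such as "&" are percent-encoded.
    query = urlencode({"q": keyword.strip(), "client": client})
    return f"{base_url}?{query}"


def keywords_to_google_urls(keywords: list[str]) -> dict[str, str]:
    """Map each non-blank keyword to its Google search URL, keeping input order."""
    return {kw.strip(): keyword_to_google_url(kw) for kw in keywords if kw.strip()}


if __name__ == "__main__":
    for keyword, url in keywords_to_google_urls(["cosmetic surgery", "knee surgery"]).items():
        print(keyword, url)
```

Keeping the keyword as the dictionary key makes it easy to map crawl results back to keywords later.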

Now you should have a full list of Google search URLs, one for each keyword in your list.

Finally, we need to run these through Screaming Frog to identify which ones trigger a map.

Firstly, set Screaming Frog’s user-agent string to Firefox so that it matches the ‘client’ parameter in our column C URL. You can do this by setting a custom user agent in Screaming Frog and pasting in the following: `Mozilla/5.0 (Windows NT 6.1; WOW64; rv:40.0) Gecko/20100101 Firefox/40.1`

A custom user agent can be set in Configuration > HTTP Header > User-Agent.
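A custom user agent is simply the `User-Agent` HTTP header sent with each request. If you want to reproduce a single check outside Screaming Frog, for example to debug one URL, you can set the same header with Python’s standard library. This is a minimal sketch: `build_request` is a hypothetical helper, and it only builds the request without sending it. Automated queries to Google can trigger a captcha.

```python
import urllib.request

# The Firefox user-agent string quoted above.
FIREFOX_UA = "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:40.0) Gecko/20100101 Firefox/40.1"


def build_request(url: str, user_agent: str = FIREFOX_UA) -> urllib.request.Request:
    """Create a GET request that will send a custom User-Agent header."""
    # urllib stores header names via str.capitalize(), i.e. as "User-agent".
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


if __name__ == "__main__":
    req = build_request("https://www.google.co.uk/search?q=cosmetic+surgery")
    print(req.get_method(), req.full_url)
    print(req.get_header("User-agent"))
```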

The next thing to do is to set up a custom search within Screaming Frog. You can do this by going to Configuration > Custom > Search.

In this case, what we want to search for is a web page feature that only appears when a map is present.

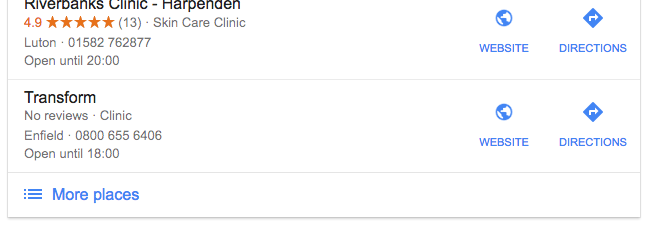

I chose to search for the ‘More places’ link that appears at the bottom left of the local pack box.

So I set my search to: `>More places`

It’s worth noting that Google changes its results pages from time to time. If the search value above doesn’t work, you can use your browser’s Inspect Element tool to find another boilerplate element that only appears when the map is present.

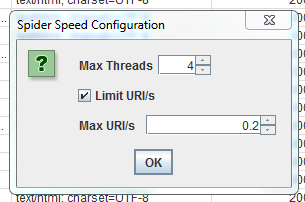
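At its core, this custom search is a check for whether a marker string appears in the page source. The sketch below approximates that logic; it does not reproduce Screaming Frog’s exact matching behaviour. `has_local_pack` and `flag_local_urls` are hypothetical helpers. `>More places` is the marker used in this post, and any extra markers you pass in are your own assumptions, found by inspecting the current results page.

```python
# ">More places" is the marker used in this post; add others if Google's
# markup changes (any extra markers are assumptions you verify yourself).
DEFAULT_MARKERS = (">More places",)


def has_local_pack(html: str, markers: tuple[str, ...] = DEFAULT_MARKERS) -> bool:
    """Return True if any marker string appears in the page source."""
    # Plain, case-sensitive substring check on the raw HTML.
    return any(marker in html for marker in markers)


def flag_local_urls(pages: dict[str, str], markers: tuple[str, ...] = DEFAULT_MARKERS) -> list[str]:
    """Return the URLs whose HTML contains a local-pack marker, in input order."""
    return [url for url, html in pages.items() if has_local_pack(html, markers)]
```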

Next, set the Screaming Frog crawl speed quite modestly so that it doesn’t trigger a captcha. I set mine to 4 threads and 0.2 URI/s, and it worked very well. I am not sure what threshold triggers the captcha, so if you have a lot of URLs you might want to leave your crawl running overnight.
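To judge whether a crawl needs to run overnight, you can do some quick arithmetic. At a cap of 0.2 URI/s, the crawl processes one URL every 5 seconds, or 720 URLs per hour. The sketch below assumes the URI/s limit, not the thread count, is what bounds throughput. `estimate_crawl_hours` is a hypothetical helper.

```python
def estimate_crawl_hours(url_count: int, uri_per_second: float = 0.2) -> float:
    """Estimate crawl duration in hours when the crawl is capped at a fixed URI/s rate."""
    if uri_per_second <= 0:
        raise ValueError("uri_per_second must be positive")
    # seconds = URLs / rate; divide by 3600 to convert to hours
    return url_count / uri_per_second / 3600


if __name__ == "__main__":
    print(f"{estimate_crawl_hours(5000):.1f} hours")  # prints "6.9 hours"
```

For example, 5,000 URLs at 0.2 URI/s take 25,000 seconds, roughly 6.9 hours, which fits comfortably in an overnight run.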

Now select List Mode in Screaming Frog and paste in all your URLs from column D to be crawled.

You should be able to see all the URLs returning a 200 response code as they are being crawled. If they are not, you may need to make some adjustments to the Google URL.
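If you export the crawl results to CSV, you can script the same status-code check. This is a minimal sketch. The column names `Address` and `Status Code` are assumptions, so check them against the header row of your own export. `non_200_urls` is a hypothetical helper.

```python
import csv
import io
from typing import TextIO

# Assumed column names: check them against the header row of your own export.
URL_COLUMN = "Address"
STATUS_COLUMN = "Status Code"


def non_200_urls(csv_file: TextIO) -> list[tuple[str, str]]:
    """Return (url, status) pairs for every row whose status code is not 200."""
    reader = csv.DictReader(csv_file)
    return [
        (row[URL_COLUMN], row[STATUS_COLUMN])
        for row in reader
        if row[STATUS_COLUMN].strip() != "200"
    ]


if __name__ == "__main__":
    sample = io.StringIO(
        "Address,Status Code\n"
        "https://www.google.co.uk/search?q=cosmetic+surgery,200\n"
        "https://www.google.co.uk/search?q=knee+surgery,429\n"
    )
    print(non_200_urls(sample))
```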

While it is crawling, spot-check your custom tab to confirm that the URLs being flagged do, in fact, have a map present. If they don’t, you may need to adjust the search value in Screaming Frog.

Once the crawl is complete, export the custom tab. This is the list of all the URLs that trigger localisation. You can then run a VLOOKUP against your initial list, or use ‘find and replace’ to turn the Google URLs back into keywords.
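As an alternative to a VLOOKUP, you can read each keyword straight back out of the URL’s `q` parameter. This is a minimal sketch, assuming the exported URLs carry the keyword in `q`, as standard Google search URLs do. The function names are hypothetical.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def google_url_to_keyword(url: str) -> Optional[str]:
    """Recover the search keyword from the q parameter of a Google search URL."""
    # parse_qs turns "+" back into spaces and undoes percent-encoding.
    values = parse_qs(urlsplit(url).query).get("q")
    return values[0] if values else None


def localised_keywords(flagged_urls: list[str]) -> list[str]:
    """Turn flagged Google URLs back into unique keywords, preserving order."""
    seen: dict[str, None] = {}
    for url in flagged_urls:
        keyword = google_url_to_keyword(url)
        if keyword:
            seen.setdefault(keyword, None)
    return list(seen)
```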

You can paste this keyword list into Keyword Keg or Keyword Planner to get search volumes, then add these to your Excel sheet to help you prioritise which local pages are the most valuable. Alternatively, you can do some additional keyword research around the list to make sure your pages target the most popular version of each keyword and to help maximise the reach of the pages.
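Once you have the volumes, you can rank them outside Excel too. This is a minimal sketch, assuming the volumes are held in a dictionary keyed by the exact keyword string. Keywords with no volume are treated as zero, and `prioritise_by_volume` is a hypothetical helper.

```python
def prioritise_by_volume(keywords: list[str], volumes: dict[str, int]) -> list[tuple[str, int]]:
    """Rank localised keywords by monthly search volume, highest first.

    Keywords missing from `volumes` are treated as 0, so they sort last.
    Ties keep their original order because sorted() is stable, even with reverse=True.
    """
    ranked = [(kw, volumes.get(kw, 0)) for kw in keywords]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

The top of the resulting list gives the keywords most worth building local pages for first.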

If needed, we can supply training on these local SEO elements or, if it sounds like too much, get in touch and we can discuss how we can help you with the project overall.
